I built my own analytics with the help of AI – cookie-free and GDPR-compliant

Not long ago, I realised I wanted a better understanding of my blog’s traffic.

I like clean and accurate data. But traditional analytics tools like Google Analytics come with serious downsides: inaccurate numbers (because of declined or blocked consent requests and various tracking blockers), the need for a cookie banner, and questionable transparency around privacy.

That is why I decided to build my own analytics – with pure, unfiltered data and full respect for the privacy of my visitors. And since we are living in 2025, I built it with the help of generative AI.

When it comes to programming, I have always considered myself an eternal beginner, ever since I started with web development at the age of 12. But AI has opened up skills and opportunities for me that I never imagined I would have. I have already written here on my blog about how AI helps me improve my coding skills by explaining its steps and decisions along the way. Thanks to that, my horizons have expanded far beyond what I thought possible. One of the results is my own custom-built analytics tool.

Defining the Goals and First Prompts

When building a project like this today, you really need to have a clear idea of what you want it to do and why. Just saying "I want my own analytics" is not enough.

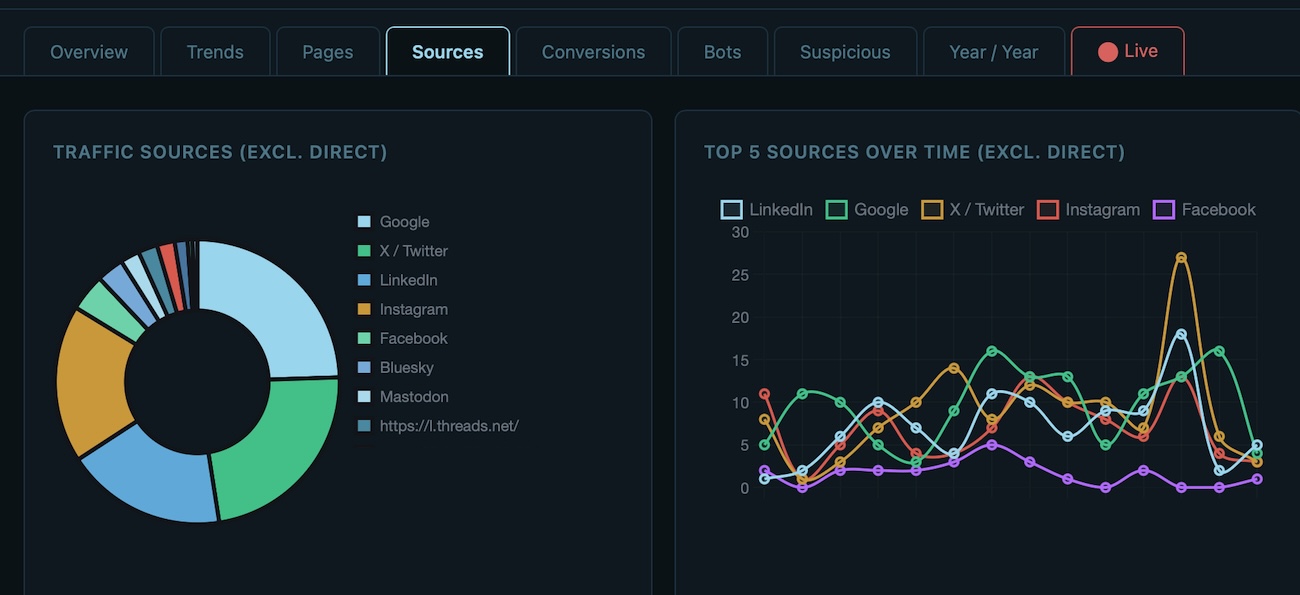

So the AI and I started by defining exactly what I wanted to measure: daily traffic, traffic sources, purchases of my premium articles, and a deeper analysis of visitor types (humans vs. suspicious activity vs. bots).
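One way to picture these goals is a single record per page view that is then aggregated into the four reports. The sketch below is only an illustration: the names `PageView` and `summarise`, the field names, and the idea of storing a purchase as a flag on the view are all hypothetical, and the visitor-type labels are taken as given because the classification logic is not described here.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class PageView:
    """Hypothetical cookie-free record: no user ID, only what is measured."""
    day: date
    source: str          # e.g. "google.com" or "direct"
    visitor_type: str    # "human", "suspicious" or "bot" (assumed labels)
    is_purchase: bool = False


def summarise(views):
    """Aggregate raw page views into the four reports listed above."""
    return {
        "daily_traffic": Counter(v.day for v in views),
        "sources": Counter(v.source for v in views),
        "visitor_types": Counter(v.visitor_type for v in views),
        "purchases": sum(v.is_purchase for v in views),
    }


# Made-up sample data, purely for demonstration.
views = [
    PageView(date(2025, 3, 1), "google.com", "human"),
    PageView(date(2025, 3, 1), "direct", "bot"),
    PageView(date(2025, 3, 2), "google.com", "human", is_purchase=True),
]
report = summarise(views)
print(report["daily_traffic"])
print(report["visitor_types"])
```

Writing the goals down in this form makes it clear which fields have to be captured on every request before any chart can be drawn.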

At first, creating the initial tables and charts seemed easy. But it did not take long before the first real challenges emerged.

The Issues I Had to Solve

The first SQL queries we used were slow and inefficient. They dragged the whole website down. We gradually tuned them, replaced wasteful LIKE comparisons with exact matches, and optimised the logic to only process the data we really needed – and suddenly, it started to fly.
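The kind of change described above can be sketched with Python's built-in `sqlite3` module. This is a minimal illustration under stated assumptions, not the actual queries from the tool: the table `page_views`, its columns, the index name, and the sample rows are all hypothetical, and SQLite stands in for whatever database the site really uses. It compares a wildcard `LIKE` on the raw referrer with an exact match on a pre-normalised host column, limited to the date range that is actually needed.

```python
import sqlite3

# Hypothetical schema: one row per page view, no cookies or user IDs.
conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE page_views (
        id INTEGER PRIMARY KEY,
        viewed_on TEXT NOT NULL,      -- ISO date, e.g. '2025-03-01'
        referrer TEXT NOT NULL,       -- full referrer URL as received
        referrer_host TEXT NOT NULL   -- normalised once at insert time
    )
    """
)
# Made-up sample rows, purely for demonstration.
rows = [
    ("2025-03-01", "https://www.google.com/search?q=analytics", "google.com"),
    ("2025-03-01", "https://news.ycombinator.com/item?id=1", "news.ycombinator.com"),
    ("2025-03-02", "https://www.google.com/", "google.com"),
    ("2025-02-10", "https://www.google.com/", "google.com"),
]
conn.executemany(
    "INSERT INTO page_views (viewed_on, referrer, referrer_host) VALUES (?, ?, ?)",
    rows,
)
conn.execute(
    "CREATE INDEX idx_views_host_day ON page_views (referrer_host, viewed_on)"
)

# Wasteful: a leading wildcard forces a scan over every stored row.
SLOW = "SELECT COUNT(*) FROM page_views WHERE referrer LIKE '%google.com%'"

# Leaner: exact match on the indexed column, restricted to recent days.
FAST = """
    SELECT COUNT(*) FROM page_views
    WHERE referrer_host = ? AND viewed_on >= ?
"""


def query_plan(sql, params=()):
    """Return SQLite's description of how it would execute the query."""
    plan_rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return " | ".join(row[3] for row in plan_rows)


fast_params = ("google.com", "2025-03-01")
slow_count = conn.execute(SLOW).fetchone()[0]
fast_count = conn.execute(FAST, fast_params).fetchone()[0]
slow_plan = query_plan(SLOW)
fast_plan = query_plan(FAST, fast_params)

print("LIKE query: ", slow_count, "rows;", slow_plan)
print("Exact query:", fast_count, "rows;", fast_plan)
```

Storing the host in its own column at insert time is what makes the exact match possible. In SQLite, a `LIKE` pattern that starts with a wildcard cannot use an ordinary index, so the database has to read every row, while the exact match can go straight to the matching index entries.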
