Building an AI Stock Market Prediction System That Grades Itself

In July 2024 I wrote about the changing moods of the stock market. I described tracking valuation ratios alongside media narratives as a mood thermometer. That article ended with a line I have come back to often: the stock market is a reflection of collective human emotions and behaviours.

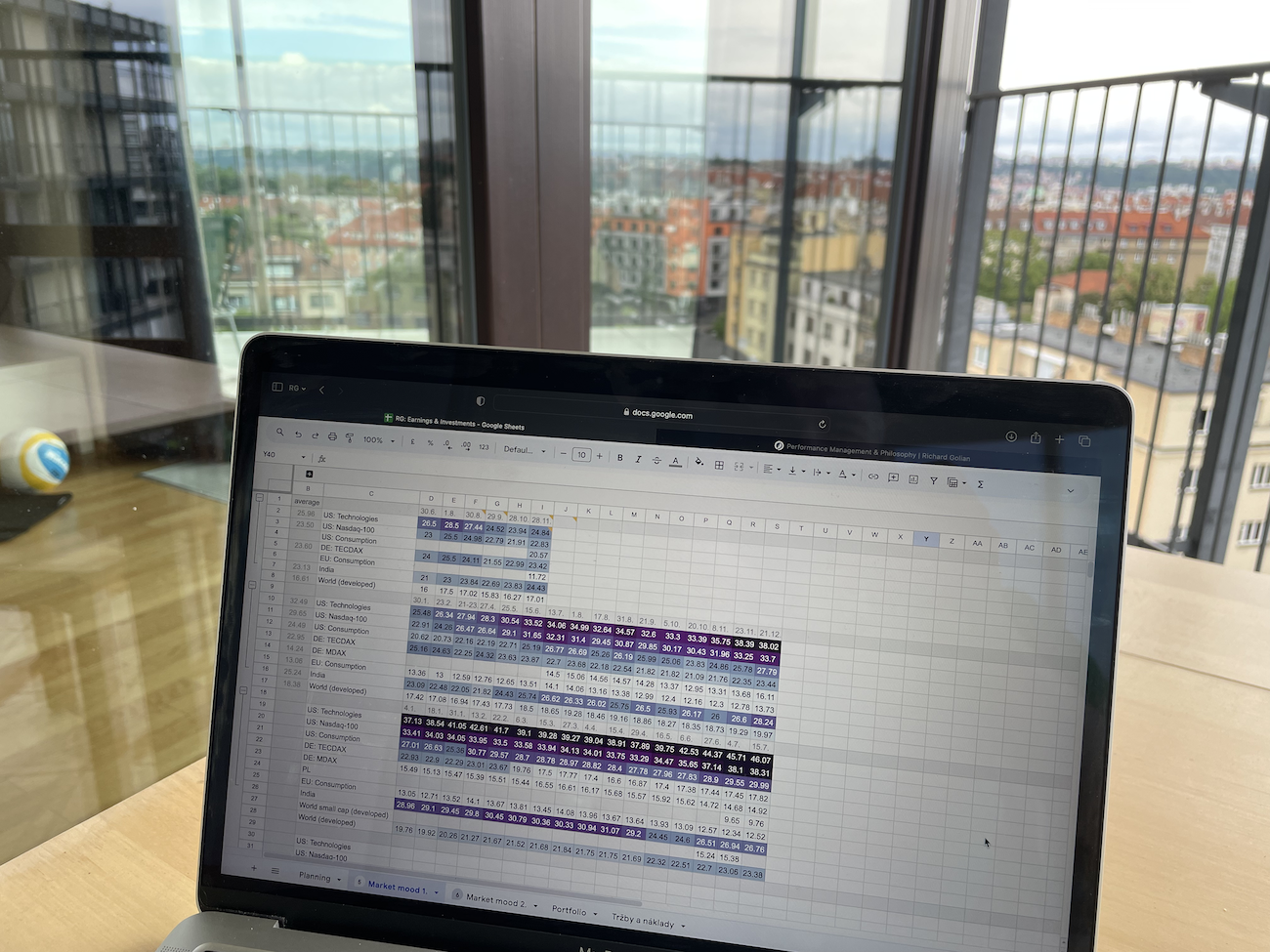

Two years later, that thermometer still lives in a Google spreadsheet — valuation ratios in columns, my own commentary on what the financial press was saying alongside them. It works for me. It does not work for anyone else. And more importantly, it cannot be tested. I cannot point to a calibrated record of how often my readings have been right, how often they have been wrong, or whether I am better than a coin flip when I claim to see elevated valuation.

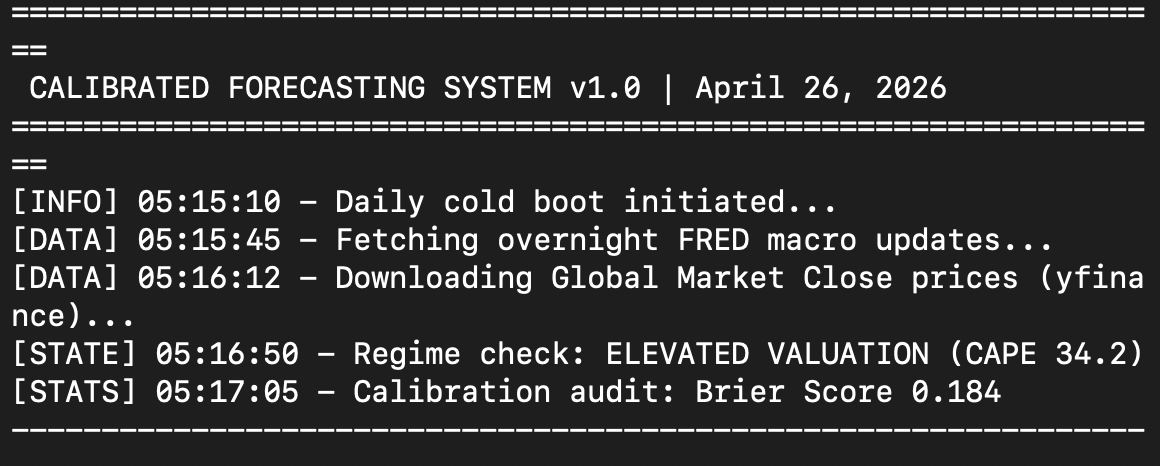

So I decided to build something. I have been at it since five this morning. I am writing this at eight. The first version is now running on my own machine. The pipeline works end to end. It does not yet have enough graded predictions in it to tell me anything meaningful — that part is just beginning. This article is the first in a series that documents the build itself, and what the system tells me once the record starts to fill up.

WHAT IS A CALIBRATED PREDICTION SYSTEM BUILT ON?

Three older articles converge into the design of what I have built. Each is a separate idea. Together they form the spine.

The first is risk-reward asymmetry. Every prediction the system emits comes with explicit probabilities and a confidence number. It has to answer the question I keep asking myself out loud. If I am wrong, how much do I lose? If I am right, how much do I gain? And is the ratio in my favour?
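Those three questions reduce to simple arithmetic. The following is a minimal sketch, not the system's own code: the function names and the example numbers are assumptions chosen for illustration, and it treats a prediction as a simple two-outcome bet.

```python
def reward_to_risk(gain_if_right: float, loss_if_wrong: float) -> float:
    """How many units of gain are on offer per unit of loss at risk."""
    if gain_if_right < 0 or loss_if_wrong <= 0:
        raise ValueError("gain must be >= 0 and loss must be > 0")
    return gain_if_right / loss_if_wrong


def expected_value(p_right: float, gain_if_right: float, loss_if_wrong: float) -> float:
    """Probability-weighted payoff of a two-outcome bet."""
    if not 0.0 <= p_right <= 1.0:
        raise ValueError("p_right must be a probability between 0 and 1")
    return p_right * gain_if_right - (1.0 - p_right) * loss_if_wrong


# Illustrative numbers only: a 60% chance of gaining 3 against a 40% chance of losing 2.
ratio = reward_to_risk(3.0, 2.0)
ev = expected_value(0.6, 3.0, 2.0)
print(f"reward-to-risk {ratio:.2f}, expected value {ev:+.2f}")
```

A ratio above one is not enough by itself. The ratio and the probability have to be read together, which is why the expected value is computed alongside it.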

The second is decision quality over decision outcome. It runs through both "Decision-Making in Marketing and Advertising Under Uncertainty" and "I make mistake after mistake". The primary metric is not hit-rate. It is calibration error. When the system says 70 per cent, does the world deliver 70 per cent? A predictor that says 95 per cent and is right 80 per cent of the time is more dangerous than one that says 70 per cent and is right 70 per cent of the time. The build enforces this in its own UI. Hit-rate is never reported without calibration error next to it. The numbers will only become meaningful once the record has enough graded predictions in it. A later article in this series will go into how the comparison is computed.
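To make the 95-versus-70 comparison concrete, here is a minimal sketch of one common way to measure calibration: a binned expected calibration error. It is an assumption for illustration, not necessarily the computation the system uses. The function names, the ten-bin default and the toy data are all hypothetical.

```python
from collections import defaultdict


def hit_rate(outcomes):
    """Share of predictions whose event actually happened (1) rather than not (0)."""
    return sum(outcomes) / len(outcomes)


def calibration_error(stated_probs, outcomes, n_bins=10):
    """Binned expected calibration error.

    Groups predictions by stated probability, then takes the size-weighted
    average gap between the mean stated probability and the observed frequency.
    """
    if len(stated_probs) != len(outcomes) or not stated_probs:
        raise ValueError("need two equally long, non-empty sequences")
    bins = defaultdict(list)
    for p, o in zip(stated_probs, outcomes):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 falls in the last bin
        bins[idx].append((p, o))
    total = len(stated_probs)
    error = 0.0
    for items in bins.values():
        mean_p = sum(p for p, _ in items) / len(items)
        observed = sum(o for _, o in items) / len(items)
        error += (len(items) / total) * abs(mean_p - observed)
    return error


# Toy data: one predictor always says 95% and is right 16 times out of 20,
# the other always says 70% and is right 7 times out of 10.
overconfident = ([0.95] * 20, [1] * 16 + [0] * 4)
honest = ([0.70] * 10, [1] * 7 + [0] * 3)

for name, (probs, outs) in {"overconfident": overconfident, "honest": honest}.items():
    print(name, f"hit-rate {hit_rate(outs):.2f}", f"calibration error {calibration_error(probs, outs):.2f}")
```

On this toy data the overconfident predictor has the higher hit-rate and the larger calibration error, which is the case for reporting the two numbers side by side.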

The third is the mood thermometer. I described it as my way of reading the market, partly through how expensive it was against its own history, and partly through how the financial press was talking about it. I returned to both halves of it later, in "The Stock Market Hums with Hope" and "Do you know what CAPE is?" In the first phase of the build, the system formalises only the valuation half. It computes the CAPE percentile against the full distribution since 1871. It classifies the market into one of eighteen regimes. Every smart prediction is conditioned on the regime it was made in. The narrative half stays in the spreadsheet, for now.
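A percentile against a historical distribution is a percentile rank: the share of past readings at or below today's. The sketch below assumes that definition. The function name is hypothetical and the five history values are invented for illustration. They are not real CAPE readings.

```python
from bisect import bisect_right


def percentile_rank(history, current):
    """Percentage of historical readings that are less than or equal to `current`."""
    if not history:
        raise ValueError("history must not be empty")
    ordered = sorted(history)
    return 100.0 * bisect_right(ordered, current) / len(ordered)


# Invented numbers, not real CAPE data.
toy_history = [10.0, 15.0, 20.0, 25.0, 30.0]
print(percentile_rank(toy_history, 25.0))
```

With the real series, `history` would hold every monthly reading since 1871 and `current` the latest one.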

WHAT THE FIRST VERSION ACTUALLY DOES

Daily, on my own machine, the build ingests S&P 500 OHLCV data, FRED macro indicators, and the Shiller CAPE series. It also pulls valuation fundamentals from yfinance.

It then computes valuation features. Trailing and forward P/E. Price to book. Dividend yield. CAPE percentile against the long historical distribution. From those features it labels today's regime, choosing one of eighteen. Five examples: low-volatility uptrend, high-volatility correction, range-bound, elevated valuation, and cyclical trough.
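The ratios themselves follow standard textbook definitions. The sketch below applies them to per-share inputs. The class and function names and the example numbers are assumptions. The regime-labelling rules are not described here, so the sketch does not attempt them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ValuationFeatures:
    trailing_pe: float      # price / earnings per share over the last 12 months
    forward_pe: float       # price / expected earnings per share, next 12 months
    price_to_book: float    # price / book value per share
    dividend_yield: float   # annual dividend per share / price, as a fraction


def valuation_features(price, trailing_eps, forward_eps, book_value_per_share, annual_dividend_per_share):
    """Compute the four ratios from per-share inputs (all denominators must be positive)."""
    if min(price, trailing_eps, forward_eps, book_value_per_share) <= 0:
        raise ValueError("price, earnings and book value must be positive for these ratios")
    return ValuationFeatures(
        trailing_pe=price / trailing_eps,
        forward_pe=price / forward_eps,
        price_to_book=price / book_value_per_share,
        dividend_yield=annual_dividend_per_share / price,
    )


# Round illustrative numbers, not market data.
print(valuation_features(100.0, 5.0, 4.0, 50.0, 2.0))
```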

It then emits predictions for the S&P 500 across six horizons, from one day to twelve months. Each prediction is a probability distribution with a calibrated confidence number attached. It is not a single number.
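One way to picture a prediction that is a distribution rather than a single number is as a small immutable record. This is a sketch under assumptions: the field names, the three outcome buckets ("down", "flat", "up") and the calendar-day horizon are hypothetical, not the system's actual schema.

```python
import math
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Mapping


@dataclass(frozen=True)
class Prediction:
    made_on: date
    horizon_days: int                   # e.g. 1 for the one-day horizon
    regime: str                         # regime label at the time of prediction
    probabilities: Mapping[str, float]  # outcome bucket -> probability
    confidence: float                   # between 0 and 1

    def __post_init__(self):
        if self.horizon_days <= 0:
            raise ValueError("horizon must be at least one day")
        if any(p < 0 for p in self.probabilities.values()):
            raise ValueError("probabilities must be non-negative")
        if not math.isclose(sum(self.probabilities.values()), 1.0, abs_tol=1e-9):
            raise ValueError("probabilities must sum to 1")
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0 and 1")

    @property
    def review_date(self) -> date:
        """Calendar date on which the prediction is due to be graded."""
        return self.made_on + timedelta(days=self.horizon_days)


# Hypothetical example values.
p = Prediction(date(2026, 1, 5), 1, "range-bound", {"down": 0.3, "flat": 0.3, "up": 0.4}, 0.6)
print(p.review_date)
```

Freezing the dataclass and validating on construction means a malformed distribution is rejected at creation time, before it can be stored.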

Each prediction is graded at its review date. The record is never edited. The system judges itself by aggregates, not single hits. A minimum sample of thirty predictions per metric is required before any number is considered meaningful. The record began today. The interesting part of this series begins once it stops being small.
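The two rules in that paragraph, a record that is never edited and a minimum of thirty graded predictions before any number counts, can be sketched as follows. The class name, method names and the shape of the summary are assumptions. Only the threshold of thirty comes from the text above.

```python
MIN_SAMPLE = 30  # threshold stated in the article


class GradedRecord:
    """Append-only store of graded predictions, keyed by prediction id."""

    def __init__(self):
        self._entries = {}

    def grade(self, prediction_id, stated_probability, happened):
        """Record a grade once; a second grade for the same id is refused."""
        if prediction_id in self._entries:
            raise ValueError(f"{prediction_id} is already graded; the record is never edited")
        self._entries[prediction_id] = (float(stated_probability), bool(happened))

    def __len__(self):
        return len(self._entries)

    def summary(self):
        """Aggregate view, or None while the sample is too small to mean anything."""
        n = len(self._entries)
        if n < MIN_SAMPLE:
            return None
        stated = sum(p for p, _ in self._entries.values()) / n
        observed = sum(h for _, h in self._entries.values()) / n
        return {"n": n, "mean_stated_probability": stated, "observed_frequency": observed}


record = GradedRecord()
record.grade("example-1", 0.7, True)  # hypothetical id and values
print(len(record), record.summary())
```

Returning `None` instead of a number below the threshold makes it hard for a reporting layer to display a statistic that the sample cannot yet support.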
